Trace (linear algebra)

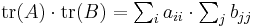

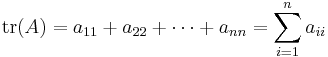

In linear algebra, the trace of an n-by-n square matrix A is defined to be the sum of the elements on the main diagonal (the diagonal from the upper left to the lower right) of A, i.e.,

where aii represents the entry on the ith row and ith column of A. The trace of a matrix is the sum of the (complex) eigenvalues, and it is an invariant with respect to a change of basis. This characterization can be used to define the trace for a linear operator in general. Note that the trace is only defined for a square matrix (i.e. n×n).

Geometrically, the trace can be interpreted as the infinitesimal change in volume (as the derivative of the determinant), which is made precise in Jacobi's formula.

The term trace is a calque from the German Spur (cognate with the English spoor), which, as a function in mathematics, is often abbreviated to "Sp".


Example

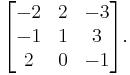

Let T be a linear operator represented by the matrix

Then tr(T) = −2 + 1 − 1 = −2.
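The computation above can be sketched in a few lines of plain Python. The helper name `trace` and the list-of-rows representation are choices made for this illustration only. The diagonal entries −2, 1, −1 are the ones used in the text; the off-diagonal entries are placeholders that do not influence the result.

```python
def trace(matrix):
    """Sum of the main-diagonal entries of a square matrix (list of rows)."""
    n = len(matrix)
    if any(len(row) != n for row in matrix):
        raise ValueError("the trace is only defined for square matrices")
    return sum(matrix[i][i] for i in range(n))


# Diagonal entries -2, 1, -1 as in the text; the off-diagonal entries are
# illustrative and have no effect on the trace.
T = [
    [-2, 2, -3],
    [-1, 1, 3],
    [2, 0, -1],
]

print(trace(T))  # -2
```

Passing a non-square list of rows raises `ValueError`, mirroring the remark that the trace is only defined for square matrices.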

The trace of the identity matrix is the dimension of the space; this leads to generalizations of dimension using trace. The trace of a projection (i.e., P² = P) is the rank of the projection. The trace of a nilpotent matrix is zero. The product of a symmetric matrix and a skew-symmetric matrix has zero trace.

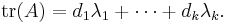

More generally, if f(x) = (x − λ1)d1···(x − λk)dk is the characteristic polynomial of a matrix A, then

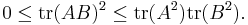

If A and B are positive semi-definite matrices of the same order then

Properties

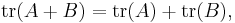

The trace is a linear map. That is,

for all square matrices A and B, and all scalars c.
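As a small sanity check of linearity, the sketch below compares both sides of tr(A + B) = tr(A) + tr(B) and tr(cA) = c·tr(A) on concrete integer matrices. The helpers `trace`, `add` and `scale` and the sample values are assumptions made for this illustration.

```python
def trace(m):
    """Sum of the main-diagonal entries of a square matrix."""
    return sum(m[i][i] for i in range(len(m)))


def add(a, b):
    """Entry-wise sum of two matrices of the same shape."""
    return [[x + y for x, y in zip(row_a, row_b)] for row_a, row_b in zip(a, b)]


def scale(c, a):
    """Multiply every entry of the matrix a by the scalar c."""
    return [[c * x for x in row] for row in a]


A = [[1, 2], [3, 4]]
B = [[0, 5], [-1, 7]]
c = 3

additive = trace(add(A, B)) == trace(A) + trace(B)
homogeneous = trace(scale(c, A)) == c * trace(A)
print(additive, homogeneous)  # True True
```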

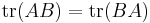

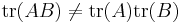

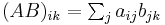

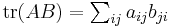

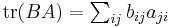

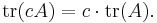

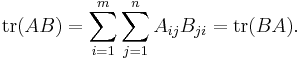

If A is an m×n matrix and B is an n×m matrix, then

Conversely, the above properties characterize the trace completely in the sense as follows. Let  be a linear functional on the space of square matrices satisfying

be a linear functional on the space of square matrices satisfying  . Then

. Then  and tr are proportional.[3]

and tr are proportional.[3]
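The identity tr(AB) = tr(BA) holds even when A and B are rectangular, in which case AB and BA have different sizes. The following sketch checks this for a 2×3 and a 3×2 matrix; `matmul` and `trace` are hypothetical helper names written for this example.

```python
def matmul(a, b):
    """Product of an (r x k) matrix a with a (k x c) matrix b, as lists of rows."""
    inner = len(b)
    return [
        [sum(a[i][k] * b[k][j] for k in range(inner)) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def trace(m):
    """Sum of the main-diagonal entries of a square matrix."""
    return sum(m[i][i] for i in range(len(m)))


A = [[1, 2, 3],
     [4, 5, 6]]          # 2 x 3
B = [[7, 8],
     [9, 10],
     [11, 12]]           # 3 x 2

AB = matmul(A, B)        # 2 x 2
BA = matmul(B, A)        # 3 x 3

print(trace(AB), trace(BA))  # 212 212
```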

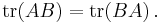

The trace is similarity-invariant, which means that A and P−1AP have the same trace. This is because

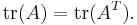

A matrix and its transpose have the same trace:

Let A be a symmetric matrix, and B an anti-symmetric matrix. Then

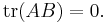
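These three facts (similarity invariance, invariance under transposition, and the vanishing trace of a symmetric times an anti-symmetric matrix) can be checked on small integer matrices. The sketch below uses a hand-picked invertible P whose inverse is written out explicitly; all names and values are assumptions for illustration.

```python
def matmul(a, b):
    """Product of two matrices given as lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def transpose(m):
    """Swap rows and columns."""
    return [list(col) for col in zip(*m)]


def trace(m):
    """Sum of the main-diagonal entries of a square matrix."""
    return sum(m[i][i] for i in range(len(m)))


A = [[1, 2], [3, 4]]
P = [[1, 1], [0, 1]]
P_inv = [[1, -1], [0, 1]]            # inverse of P, verified by hand

similar = matmul(P_inv, matmul(A, P))  # P^-1 A P
S = [[1, 2], [2, 3]]                  # symmetric
K = [[0, 4], [-4, 0]]                 # anti-symmetric

print(trace(similar) == trace(A))          # True
print(trace(transpose(A)) == trace(A))     # True
print(trace(matmul(S, K)))                 # 0
```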

When both A and B are n by n, the trace of the (ring-theoretic) commutator of A and B vanishes: tr([A, B]) = 0; one can state this as "the trace is a map of Lie algebras $\mathfrak{gl}_n \to k$ from operators to scalars", as the commutator of scalars is trivial (it is an abelian Lie algebra). In particular, using similarity invariance, it follows that the identity matrix, whose trace n is nonzero in characteristic zero, is never similar to the commutator of any pair of matrices.

Conversely, any square matrix with zero trace is the commutator of some pair of matrices.[4] Moreover, any square matrix with zero trace is unitarily equivalent to a square matrix with diagonal consisting of all zeros.

The trace of any power of a nilpotent matrix is zero. When the characteristic of the base field is zero, the converse also holds: if  for all

for all  , then

, then  is nilpotent.

is nilpotent.

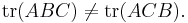

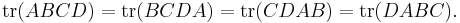

Note that order does matter in taking traces: in general,

In other words, we can only interchange the two halves of the expression, albeit repeatedly. This means that the trace is invariant under cyclic permutations, i.e.,

However, if products of three symmetric matrices are considered, any permutation is allowed. (Proof: tr(ABC) = tr(AT BT CT) = tr((CBA)T) = tr(CBA).) For more than three factors this is not true. This is known as the cyclic property.

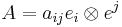

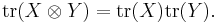

Unlike the determinant, the trace of the product is not the product of traces. What is true is that the trace of the tensor product of two matrices is the product of their traces:

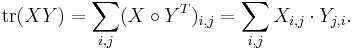
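The cyclic property and the tensor-product rule can both be illustrated numerically. In the sketch below the matrices are chosen so that a non-cyclic reordering really does change the trace; `kron` is a small hand-written Kronecker product standing in for the tensor product of matrices. All names and values are assumptions for this example.

```python
def matmul(a, b):
    """Product of two matrices given as lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def trace(m):
    """Sum of the main-diagonal entries of a square matrix."""
    return sum(m[i][i] for i in range(len(m)))


def kron(x, y):
    """Kronecker product: block (i, j) of the result is x[i][j] * y."""
    return [
        [x_ij * y_kl for x_ij in x_row for y_kl in y_row]
        for x_row in x
        for y_row in y
    ]


A = [[1, 2], [3, 4]]
B = [[0, 1], [0, 0]]
C = [[0, 0], [1, 0]]

abc = trace(matmul(matmul(A, B), C))
bca = trace(matmul(matmul(B, C), A))
cab = trace(matmul(matmul(C, A), B))
acb = trace(matmul(matmul(A, C), B))   # not a cyclic permutation of ABC

print(abc, bca, cab)  # 1 1 1
print(acb)            # 4

X = [[1, 2], [3, 4]]
Y = [[0, 5], [6, 7]]
print(trace(kron(X, Y)), trace(X) * trace(Y))  # 35 35
```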

The trace of a product can be rewritten as the sum of all elements from a Hadamard product (entry-wise product):

This should be more computationally efficient, since the matrix product of an  matrix with an

matrix with an  one (first and last dimensions must match to give a square matrix for the trace) has

one (first and last dimensions must match to give a square matrix for the trace) has  multiplications and

multiplications and  additions, whereas the computation of the Hadamard version (entry-wise product) requires only

additions, whereas the computation of the Hadamard version (entry-wise product) requires only  multiplications followed by

multiplications followed by  additions.

additions.

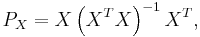

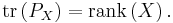
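A minimal sketch of the two ways of computing the trace of XᵀY, assuming X and Y have the same shape: the first forms the full matrix product and then sums its diagonal, the second sums the entry-wise products directly. The function names are hypothetical.

```python
def trace_via_product(x, y):
    """tr(X^T Y) computed by forming the full product X^T Y first."""
    xt = [list(col) for col in zip(*x)]          # transpose of x
    product = [
        [sum(xt[i][k] * y[k][j] for k in range(len(y))) for j in range(len(y[0]))]
        for i in range(len(xt))
    ]
    return sum(product[i][i] for i in range(len(product)))


def trace_via_hadamard(x, y):
    """tr(X^T Y) computed as the sum of entry-wise products X_ij * Y_ij."""
    return sum(
        x_ij * y_ij
        for x_row, y_row in zip(x, y)
        for x_ij, y_ij in zip(x_row, y_row)
    )


X = [[1, 2, 3],
     [4, 5, 6]]
Y = [[7, 8, 9],
     [10, 11, 12]]

print(trace_via_product(X, Y), trace_via_hadamard(X, Y))  # 217 217
```

The entry-wise version never builds the off-diagonal entries of the product, which is where the saving in multiplications comes from.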

- The trace of a projection matrix is the dimension of the target space. If

-

- then

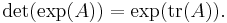
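As an illustration, the sketch below builds the projection X(XᵀX)⁻¹Xᵀ for a 3×2 matrix X with two linearly independent columns and checks that the result is idempotent with trace 2. Exact arithmetic via `fractions.Fraction` avoids rounding issues. The restriction to two columns (so that a closed-form 2×2 inverse suffices) is a simplification made for this example.

```python
from fractions import Fraction


def matmul(a, b):
    """Product of two matrices given as lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def transpose(m):
    return [list(col) for col in zip(*m)]


def inverse_2x2(m):
    """Inverse of a 2 x 2 matrix via the adjugate formula (assumes det != 0)."""
    (a, b), (c, d) = m
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]


def trace(m):
    return sum(m[i][i] for i in range(len(m)))


# X has two linearly independent columns, so rank(X) = 2.
X = [[Fraction(1), Fraction(0)],
     [Fraction(0), Fraction(1)],
     [Fraction(1), Fraction(1)]]

Xt = transpose(X)
P = matmul(matmul(X, inverse_2x2(matmul(Xt, X))), Xt)   # 3 x 3 projection

print(matmul(P, P) == P)   # True: P is idempotent
print(trace(P))            # 2
```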

Exponential trace

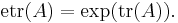

Expressions like exp(tr(A)), where A is a square matrix, occur so often in some fields (e.g. multivariate statistical theory), that a shorthand notation has become common:

This is sometimes referred to as the exponential trace function.

Trace of a linear operator

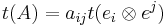

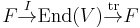

Given some linear map f : V → V (V is a finite-dimensional vector space) generally, we can define the trace of this map by considering the trace of matrix representation of f, that is, choosing a basis for V and describing f as a matrix relative to this basis, and taking the trace of this square matrix. The result will not depend on the basis chosen, since different bases will give rise to similar matrices, allowing for the possibility of a basis-independent definition for the trace of a linear map.

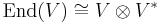

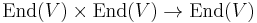

Such a definition can be given using the canonical isomorphism between the space End(V) of linear maps on V and V⊗V*, where V* is the dual space of V. Let v be in V and let f be in V*. Then the trace of the decomposable element v⊗f is defined to be f(v); the trace of a general element is defined by linearity. Using an explicit basis for V and the corresponding dual basis for V*, one can show that this gives the same definition of the trace as given above.
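In coordinates, the decomposable element v⊗f corresponds to the rank-one matrix with entries vᵢfⱼ, and its trace is the number f(v). The sketch below checks this for one choice of v and f, both written as coordinate lists with respect to a basis and its dual basis; the names `outer` and `apply_functional` are hypothetical.

```python
def outer(v, f):
    """Matrix of the operator w -> f(w) * v, with entries v[i] * f[j]."""
    return [[v_i * f_j for f_j in f] for v_i in v]


def apply_functional(f, v):
    """Value f(v) of the linear functional f (dual-basis coordinates) on v."""
    return sum(f_i * v_i for f_i, v_i in zip(f, v))


def trace(m):
    """Sum of the main-diagonal entries of a square matrix."""
    return sum(m[i][i] for i in range(len(m)))


v = [1, 2, 3]
f = [4, 5, 6]

print(trace(outer(v, f)), apply_functional(f, v))  # 32 32
```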

Eigenvalue relationships

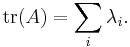

If A is a square n-by-n matrix with real or complex entries and if λ1,...,λn are the eigenvalues of A (listed according to their algebraic multiplicities), then

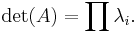

This follows from the fact that A is always similar to its Jordan form, an upper triangular matrix having λ1,...,λn on the main diagonal. In contrast, the determinant of  is the product of its eigenvalues; i.e.,

is the product of its eigenvalues; i.e.,

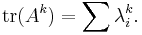

More generally,
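For a 2×2 matrix the eigenvalues are the roots of λ² − tr(A)λ + det(A), so the relations tr(A) = Σλᵢ, det(A) = Πλᵢ and tr(Aᵏ) = Σλᵢᵏ can be checked directly. The sketch below assumes a matrix with real eigenvalues; the helper names and the sample matrix are illustrative.

```python
import math


def matmul(a, b):
    """Product of two matrices given as lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def trace(m):
    return sum(m[i][i] for i in range(len(m)))


def eigenvalues_2x2(m):
    """Roots of x^2 - tr(m) x + det(m); assumes the discriminant is >= 0."""
    (a, b), (c, d) = m
    tr, det = a + d, a * d - b * c
    root = math.sqrt(tr * tr - 4 * det)
    return (tr + root) / 2, (tr - root) / 2


A = [[4, 1], [2, 3]]
lam1, lam2 = eigenvalues_2x2(A)

A_cubed = matmul(matmul(A, A), A)

print(trace(A), lam1 + lam2)                 # trace vs. sum of eigenvalues
print(trace(A_cubed), lam1 ** 3 + lam2 ** 3)  # trace of A^3 vs. sum of cubes
```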

Derivatives

The trace is the derivative of the determinant: it is the Lie algebra analog of the (Lie group) map of the determinant. This is made precise in Jacobi's formula for the derivative of the determinant (see under determinant). As a particular case,  : the trace is the derivative of the determinant at the identity. From this (or from the connection between the trace and the eigenvalues), one can derive a connection between the trace function, the exponential map between a Lie algebra and its Lie group (or concretely, the matrix exponential function), and the determinant:

: the trace is the derivative of the determinant at the identity. From this (or from the connection between the trace and the eigenvalues), one can derive a connection between the trace function, the exponential map between a Lie algebra and its Lie group (or concretely, the matrix exponential function), and the determinant:

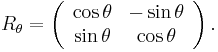

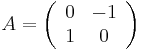

For example, consider the one-parameter family of linear transformations given by rotation through angle θ,

$$R_\theta = \begin{bmatrix} \cos\theta & -\sin\theta \\ \sin\theta & \cos\theta \end{bmatrix}.$$

These transformations all have determinant 1, so they preserve area. The derivative of this family at θ = 0 is the antisymmetric matrix

$$A = \begin{bmatrix} 0 & -1 \\ 1 & 0 \end{bmatrix},$$

which clearly has trace zero, indicating that this matrix represents an infinitesimal transformation which preserves area.
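Both statements, that the trace is the derivative of the determinant at the identity and that det(exp(A)) = exp(tr(A)), can be checked numerically for a 2×2 matrix. The sketch below uses a truncated Taylor series for the matrix exponential and a central finite difference for the derivative; both are rough numerical stand-ins chosen for this illustration, not recommended general-purpose methods, and every name and value here is an assumption.

```python
import math


def matmul(a, b):
    """Product of two matrices given as lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def det_2x2(m):
    return m[0][0] * m[1][1] - m[0][1] * m[1][0]


def trace(m):
    return sum(m[i][i] for i in range(len(m)))


def expm_taylor(a, terms=30):
    """Truncated series I + A + A^2/2! + ... (adequate for small matrices)."""
    n = len(a)
    result = [[float(i == j) for j in range(n)] for i in range(n)]
    term = [row[:] for row in result]
    for k in range(1, terms):
        term = [[x / k for x in row] for row in matmul(term, a)]  # A^k / k!
        result = [[r + t for r, t in zip(rr, tt)] for rr, tt in zip(result, term)]
    return result


def det_identity_plus(t, a):
    """det(I + t*A) for a 2 x 2 matrix A."""
    return det_2x2([[1 + t * a[0][0], t * a[0][1]],
                    [t * a[1][0], 1 + t * a[1][1]]])


A = [[0.5, 1.0], [-1.0, 0.25]]

# Central difference approximation of d/dt det(I + tA) at t = 0.
h = 1e-6
derivative = (det_identity_plus(h, A) - det_identity_plus(-h, A)) / (2 * h)

print(derivative, trace(A))
print(det_2x2(expm_taylor(A)), math.exp(trace(A)))
```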

A related characterization of the trace applies to linear vector fields. Given a matrix A, define a vector field F on Rn by F(x) = Ax. The components of this vector field are linear functions (given by the rows of A). The divergence div F is a constant function, whose value is equal to tr(A). By the divergence theorem, one can interpret this in terms of flows: if F(x) represents the velocity of a fluid at the location x, and U is a region in Rn, the net flow of the fluid out of U is given by tr(A)· vol(U), where vol(U) is the volume of U.

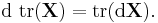

The trace is a linear operator, hence it commutes with the derivative:

Applications

The trace is used to define characters of group representations. Two representations  of a group

of a group  are equivalent (up to change of basis on

are equivalent (up to change of basis on  ) if

) if  for all

for all  .

.

The trace also plays a central role in the distribution of quadratic forms.

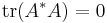

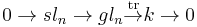

Lie algebra

The trace is a map of Lie algebras  from the Lie algebra gln of operators on a n-dimensional space (

from the Lie algebra gln of operators on a n-dimensional space ( matrices) to the Lie algebra k of scalars; as k is abelian (the Lie bracket vanishes), the fact that this is a map of Lie algebras is exactly the statement that the trace of a bracket vanishes:

matrices) to the Lie algebra k of scalars; as k is abelian (the Lie bracket vanishes), the fact that this is a map of Lie algebras is exactly the statement that the trace of a bracket vanishes: ![\operatorname{tr}([A,B])=0.](/2012-wikipedia_en_all_nopic_01_2012/I/57bffcc649512ed0563227c0c248ac4b.png)

The kernel of this map, a matrix whose trace is zero, is often said to be traceless or tracefree, and these matrices form the simple Lie algebra sln, which is the Lie algebra of the special linear group of matrices with determinant 1. The special linear group consists of the matrices which do not change volume, while the special linear algebra is the matrices which infinitesimally do not change volume.

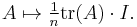

In fact, there is a internal direct sum decomposition  of operators/matrices into traceless operators/matrices and scalars operators/matrices. The projection map onto scalar operators can be expressed in terms of the trace, concretely as:

of operators/matrices into traceless operators/matrices and scalars operators/matrices. The projection map onto scalar operators can be expressed in terms of the trace, concretely as:

Formally, one can compose the trace (the counit map) with the unit map  of "inclusion of scalars" to obtain a map

of "inclusion of scalars" to obtain a map  mapping onto scalars, and multiplying by n. Dividing by n makes this a projection, yielding the formula above.

mapping onto scalars, and multiplying by n. Dividing by n makes this a projection, yielding the formula above.

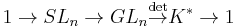
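The decomposition of a matrix into a scalar part (tr(A)/n)·I and a traceless remainder can be written out directly. The sketch below uses exact fractions; the helper names `scalar_part` and `traceless_part` are hypothetical, introduced only for this example.

```python
from fractions import Fraction


def trace(m):
    return sum(m[i][i] for i in range(len(m)))


def scalar_part(m):
    """Projection onto scalar matrices: (tr(m) / n) times the identity."""
    n = len(m)
    s = Fraction(trace(m), n)
    return [[s if i == j else Fraction(0) for j in range(n)] for i in range(n)]


def traceless_part(m):
    """Remainder after subtracting the scalar part; its trace is zero."""
    s = scalar_part(m)
    n = len(m)
    return [[m[i][j] - s[i][j] for j in range(n)] for i in range(n)]


A = [[1, 2], [3, 4]]
S = scalar_part(A)
Z = traceless_part(A)

print(trace(Z))                    # 0
print(scalar_part(S) == S)         # True: applying the projection twice changes nothing
```

Adding `S` and `Z` entry by entry recovers `A`, which is the direct sum decomposition in coordinates.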

In terms of short exact sequences, one has

$$0 \to \mathfrak{sl}_n \to \mathfrak{gl}_n \overset{\operatorname{tr}}{\to} k \to 0,$$

which is analogous to

$$1 \to SL_n \to GL_n \overset{\det}{\to} k^{*} \to 1$$

for Lie groups. However, the trace splits naturally (via $1/n$ times scalars) so $\mathfrak{gl}_n = \mathfrak{sl}_n \oplus k$, but the splitting of the determinant would be as the nth root times scalars, and this does not in general define a function, so the determinant does not split and the general linear group does not decompose:

$$GL_n \neq SL_n \times k^{*}.$$

Bilinear forms

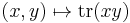

The bilinear form

is called the Killing form, which is used for the classification of Lie algebras.

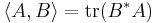

The trace defines a bilinear form:

(x, y square matrices).

The form is symmetric, non-degenerate[5] and associative in the sense that:

In a simple Lie algebra (e.g.,  ), every such bilinear form is proportional to each other; in particular, to the Killing form.

), every such bilinear form is proportional to each other; in particular, to the Killing form.

Two matrices x and y are said to be trace orthogonal if
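The symmetry of the trace form, tr(xy) = tr(yx), and its associativity, tr(x[y, z]) = tr([x, y]z), can be spot-checked on integer matrices. The sketch below is only a numerical illustration on one hand-picked triple, not a proof; the helper names are assumptions.

```python
def matmul(a, b):
    """Product of two square matrices given as lists of rows."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)] for i in range(n)]


def trace(m):
    return sum(m[i][i] for i in range(len(m)))


def commutator(a, b):
    """[a, b] = ab - ba."""
    ab, ba = matmul(a, b), matmul(b, a)
    return [[p - q for p, q in zip(row_ab, row_ba)] for row_ab, row_ba in zip(ab, ba)]


def trace_form(a, b):
    """The bilinear form (a, b) -> tr(ab)."""
    return trace(matmul(a, b))


x = [[1, 2], [3, 4]]
y = [[0, 1], [5, -2]]
z = [[2, -1], [7, 3]]

print(trace_form(x, y) == trace_form(y, x))                                # True
print(trace_form(x, commutator(y, z)) == trace_form(commutator(x, y), z))  # True
print(trace(commutator(x, y)))                                             # 0
```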

Inner product

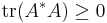

For an m-by-n matrix A with complex (or real) entries and * being the conjugate transpose, we have

with equality if and only if A = 0. The assignment

yields an inner product on the space of all complex (or real) m-by-n matrices.

The norm induced by the above inner product is called the Frobenius norm. Indeed it is simply the Euclidean norm if the matrix is considered as a vector of length mn.
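A brief sketch of this inner product for complex matrices, using the convention ⟨A, B⟩ = tr(A*B) (the other common convention, tr(B*A), differs only in which argument is conjugated). It also checks that the induced norm equals the Euclidean norm of the flattened entries. Function names and the sample matrix are assumptions for illustration.

```python
import math


def conjugate_transpose(a):
    """A*: transpose with every entry complex-conjugated."""
    return [[a[i][j].conjugate() for i in range(len(a))] for j in range(len(a[0]))]


def matmul(a, b):
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def trace(m):
    return sum(m[i][i] for i in range(len(m)))


def inner(a, b):
    """Trace inner product <A, B> = tr(A* B) on matrices of equal shape."""
    return trace(matmul(conjugate_transpose(a), b))


def frobenius_norm(a):
    """Norm induced by the trace inner product."""
    return math.sqrt(inner(a, a).real)


A = [[1 + 2j, 3],
     [0, -1j],
     [2, 1]]

euclidean = math.sqrt(sum(abs(entry) ** 2 for row in A for entry in row))
print(frobenius_norm(A), euclidean)
```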

Generalization

The concept of trace of a matrix is generalised to the trace class of compact operators on Hilbert spaces, and the analog of the Frobenius norm is called the Hilbert–Schmidt norm.

The partial trace is another generalization of the trace that is operator-valued.

If A is a general associative algebra over a field k, then a trace on A is often defined to be any linear map tr: A → k which vanishes on commutators: tr([a, b]) = 0 for all a, b in A. Such a trace is not uniquely defined; it can always at least be modified by multiplication by a nonzero scalar.

A supertrace is the generalization of a trace to the setting of superalgebras.

The operation of tensor contraction generalizes the trace to arbitrary tensors.

Coordinate-free definition

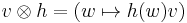

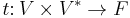

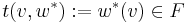

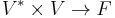

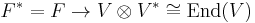

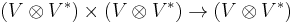

We can identify the space of linear operators on a vector space  with the space

with the space  , where

, where  . We also have a canonical bilinear function

. We also have a canonical bilinear function  that consists of applying an element

that consists of applying an element  of

of  to an element

to an element  of

of  to get an element of

to get an element of  , in symbols

, in symbols  . This induces a linear function on the tensor product (by its universal property)

. This induces a linear function on the tensor product (by its universal property)  which, as it turns out, when that tensor product is viewed as the space of operators, is equal to the trace.

which, as it turns out, when that tensor product is viewed as the space of operators, is equal to the trace.

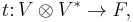

This also clarifies why  and why

and why  , as composition of operators (multiplication of matrices) and trace can be interpreted as the same pairing. Viewing

, as composition of operators (multiplication of matrices) and trace can be interpreted as the same pairing. Viewing  , one may interpret the composition map

, one may interpret the composition map  as

as

coming from the pairing  on the middle terms. Taking the trace of the product then comes from pairing on the outer terms, while taking the product in the opposite order and then taking the trace just switches which pairing is applied first. On the other hand, taking the trace of

on the middle terms. Taking the trace of the product then comes from pairing on the outer terms, while taking the product in the opposite order and then taking the trace just switches which pairing is applied first. On the other hand, taking the trace of  and the trace of

and the trace of  corresponds to applying the pairing on the left terms and on the right terms (rather than on inner and outer), and is thus different.

corresponds to applying the pairing on the left terms and on the right terms (rather than on inner and outer), and is thus different.

In coordinates, this corresponds to indexes: multiplication is given by  , so

, so  and

and  which is the same, while

which is the same, while  , which is different.

, which is different.

For $V$ finite-dimensional, with basis $\{e_i\}$ and dual basis $\{e^i\}$, the element $e_i \otimes e^j$ corresponds to the $ij$-entry of the matrix of the operator with respect to that basis. Any operator $A$ is therefore a sum of the form $A = \sum_{i,j} a_{ij} \, e_i \otimes e^j$. With $t$ defined as above, $t(A) = \sum_{i,j} a_{ij} \, t(e_i \otimes e^j)$. The latter factor, however, is just the Kronecker delta, being 1 if i=j and 0 otherwise. This shows that $t(A)$ is simply the sum of the coefficients along the diagonal. This method, however, makes coordinate invariance an immediate consequence of the definition.

Dual

Further, one may dualize this map, obtaining a map $F^{*} = F \to V \otimes V^{*}$. This map is precisely the inclusion of scalars, sending $1 \in F$ to the identity matrix: "trace is dual to scalars". In the language of bialgebras, scalars are the unit, while trace is the counit.

One can then compose these, $F \overset{I}{\to} \operatorname{End}(V) \overset{\operatorname{tr}}{\to} F$, which yields multiplication by $n$, as the trace of the identity is the dimension of the vector space.

See also

- Trace class

- Field trace

- Golden–Thompson inequality

- Characteristic function

- Specht's theorem

- von Neumann's trace inequality

Notes

- ^ Can be proven with the Cauchy–Schwarz inequality.

- ^ This is immediate from the definition of matrix multiplication.

- ^ Proof: $f(e_{ij}) = 0$ whenever $i \neq j$, and $f(e_{jj}) = f(e_{11})$ (with the standard basis $e_{ij}$), and thus

  $$f(A) = \sum_{i, j} [A]_{ij} f(e_{ij}) = \sum_i [A]_{ii} f(e_{11}) = f(e_{11}) \operatorname{tr}(A).$$

  More abstractly, this corresponds to the decomposition $\mathfrak{gl}_n = \mathfrak{sl}_n \oplus k$, as tr(AB)=tr(BA) (equivalently, $\operatorname{tr}([A,B]) = 0$) defines the trace on $\mathfrak{sl}_n$, which has complement the scalar matrices, and leaves one degree of freedom: any such map is determined by its value on scalars, which is one scalar parameter, and hence all such maps are multiples of the trace, a non-zero such map.

- ^ Proof: $\mathfrak{sl}_n$ is a semisimple Lie algebra and thus every element in it is a linear combination of commutators of pairs of elements, otherwise the derived algebra would be a proper ideal. (The stronger statement in the text, that a single commutator suffices, requires a separate argument.)

- ^ This follows from the fact that $\operatorname{tr}(A^{*}A) = 0$ if and only if $A = 0$.